Technology

Why Big Business Should Proactively Build for Privacy

Published 6 years ago

This article explores the rise of Privacy by Design (PbD), from the basic framework, to its inclusion in the GDPR, to its application in business practices and infrastructure, especially in the wake of artificial intelligence.

We had the pleasure of sitting down with Dr. Ann Cavoukian, former three-term Privacy Commissioner of Ontario and currently Distinguished Expert-in-Residence leading the Privacy by Design Centre of Excellence at Ryerson University in Toronto, Canada, to discuss this massive shift, which will upend current business practices. We also sought responses from top executives at AI start-ups and established enterprises to address the current hurdles and future business implications of Privacy by Design. This article includes contributions from Scott Bennet, a colleague researching the implications of privacy and the GDPR for emerging technology and current business practices.


I call myself an anti-marketer, especially these days. My background is predominantly in database marketing: contextualizing data to make more informed decisions in order to sell people more stuff. The data I saw, whether in banking, loyalty programs, advertising or social platforms (user transactions, digital behaviour, interactions, conversations, profiles), was sewn together to create narratives about individuals and groups: their propensities, their intents and their potential risk to the business.

While analyzing this information the way we did was established practice, the benefit went largely to businesses, to the detriment of our customers. How we depicted people was based on the data they created and on our own assumptions, which in turn informed the analysis and, ultimately, created the rules that governed the data and the decisions. Some of these rules unknowingly baked in bias, drawn from experience and from factors that perpetuated claims about a specific cluster or population.

For many years I did not question the methods we used to understand and define audiences, but it’s clear that business remained largely unchecked, using this information freely with little accountability or legal consequence.

As data becomes more central and as AI analyzes it and surfaces meaning at greater speeds, the danger of perpetuating these biases becomes even more serious, and it will inflict greater societal divisions if measures are not put in place and relentlessly enforced.

Recently, I met my maker. Call it atonement for the many years I manipulated data as a marketer. We had the honour of talking privacy with an individual I had admired for years. Dr. Ann Cavoukian, in my view, will drive a discussion across industry that will make business stand up and listen.

Remember when Canada’s Privacy Commissioner took on Facebook?

Ann Cavoukian has been an instrumental force in spreading awareness of privacy, which brought her front and centre on the world stage, pitted directly against Facebook in 2008. Back then, the federal Privacy Commissioner alleged that 22 practices violated Canada’s Personal Information Protection and Electronic Documents Act (PIPEDA). This eventually led to an FTC settlement with Facebook that mandated increased transparency with its users, requiring their explicit consent before “enacting changes that override their privacy settings.”

Ann Cavoukian is a household name in technology and business. As a three-term Privacy Commissioner of Ontario, Canada, she has spearheaded the privacy discussion for decades. Today that discussion has reached a fever pitch because the EU General Data Protection Regulation (GDPR), which came into effect May 25, 2018, includes Cavoukian’s long-advocated creation, Privacy by Design (PbD). This will raise the bar dramatically: any company or platform that does business with the EU will need to comply with these standards. At the heart of the GDPR are these guiding principles for collecting, storing and processing personal consumer information:

- Lawfulness, fairness and transparency

- Purpose limitation

- Data minimization

- Accuracy

- Storage limitation

- Integrity and confidentiality (security)

- Accountability

Privacy by Design’s premise is to proactively embed privacy at every stage in the creation of new products or services in a way that’s fair and ethical. Cavoukian argues that by implementing PbD, companies would, in effect, be well on their way to complying with the GDPR.

What Makes this Moment Ripe for Privacy by Design?

In the ’90s the web was growing exponentially. Commerce, online applications and platforms were ushering in a new era that would dramatically change business and society. Ann Cavoukian, then in her first term as Privacy Commissioner of Ontario, witnessed this phenomenon and was concerned it would keep growing dramatically. In an era of ubiquitous computing, increasing online connectivity and massive social media, she surmised that privacy needed to be developed as a model of prevention, not one that simply “asked for forgiveness later.”

Imagine going to your doctor, and he tells you that you have some signs of cancer developing and says, “We’ll see if it gets worse and if it does, we’ll send you for some chemo”. What an unthinkable proposition! I want it to be equally unthinkable that you would let privacy harms develop and just wait for the breach, as opposed to preventing them from occurring. That’s what started PbD.

In 2010, at the International Conference of Data Protection and Privacy Commissioners in Jerusalem, Cavoukian advanced a resolution that PbD should complement regulatory compliance to mitigate potential harms. It passed unanimously. The reason?

Everyone saw this was just the tip of the iceberg in identifying the privacy harms, and we were unable to address all the data breaches and privacy harms that were evading our detection because the sophistication of perpetrators meant that the majority of breaches were remaining largely unknown, unchallenged and unregulated. As a result, PbD became a complement to the current privacy regulation, which was no longer sustainable as the sole method of ensuring future privacy.

These days the issue of data security has gotten equal, if not more, airplay. Cavoukian argues:

When you have an increase in terrorist incidents like San Bernardino, the Charlie Hebdo attacks in Paris, and Manchester, the pendulum swings right back to: Forget about privacy, we need security. Of course we need security, but not to the exclusion of privacy!

I always say that Privacy is all about control — personal control relating to the uses of your own data. It’s not about secrecy. It drives me crazy when people say ‘Well, if you have nothing to hide, what’s the problem?’ The problem is that’s NOT what freedom is about. Freedom means YOU get to decide, as a law-abiding citizen, what data you want to disclose and to whom — to the government, to companies, to your employer.

Pew Research conducted an internet study after the Snowden revelations to gauge consumer sentiment on individual privacy. Key findings:

There is widespread concern about surveillance by both government and business:

• 91% of adults agreed that consumers had lost control over their personal information;

• 80% of social network users are concerned about third parties accessing their data;

• 80% of adults agreed that Americans should be concerned about government surveillance.

Context is Key

Even those who understand they are trading their information for an expectation of value should be fully informed of how that value is extracted from their data. Cavoukian cautions:

Privacy is not a religion. If you want to give away your information, be my guest, as long as YOU make the decision to do that. Context is key. What’s sensitive to me may be meaningless to you and vice versa… At social gatherings, even my doctors won’t admit they’re my doctors! That’s how much they protect my privacy. That is truly wonderful! They go to great lengths to protect your personal health information.

Selling the need for privacy requires persistent education. Unless people have been personally affected, many don’t make the connection. Does the average person know the implications of IoT devices picking up the “sweet nothings” they say to their spouse or their children? When they realize it, they usually object vehemently.

Context surfaces the importance of choice. Privacy is no longer an all-or-nothing game subsumed under a company’s terms and conditions, where a single click on “Accept” automatically grants full permission. Those days are over.

While some may object to their data being analyzed and contextualized for insurance purposes, they may allow their personal health history to be included, in anonymized form, in research on cancers endemic to their particular region.

Context is a matter of choice; freedom of choice is essential to preserving our freedom.

Privacy Does Not Equal Secrecy

Cavoukian emphasizes that privacy is not about having something to hide. Everyone has spheres of personal information that are very sensitive to them, which they may or may not wish to disclose.

You must have the choice. You have to be the one to make the decision. That’s why the issue of personal control is so important.

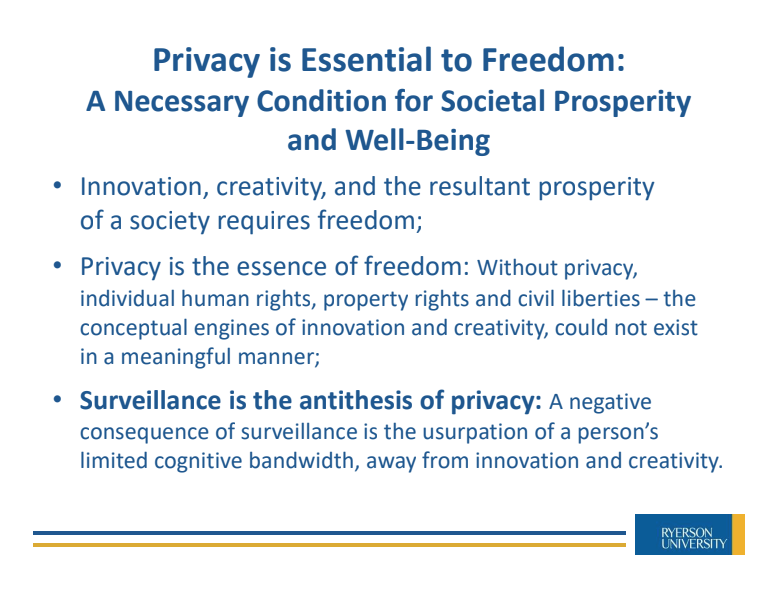

I extracted this slide from Ann Cavoukian’s recent presentation:

The Chinese Social Credit System was created to develop more transparency and improve trustworthiness among its citizens. It’s a dystopia we do not want. China is clearly a surveillance society that contradicts the values of a free society. Cavoukian crystallizes the notion that privacy forms the foundation of our freedom: if you value freedom, you value privacy.

Look at Germany. It’s no accident that Germany is the leading privacy and data protection country in the world. It’s no accident they had to endure the abuses of the Third Reich and the complete cessation of their privacy and their freedom. And when that ended, they said, ‘Never again will we allow the state to strip us of our privacy — of our freedom!’ And they have literally stood by that.

Post-Snowden, I wrote “The NSA, Privacy and the Blatant Realization: Nothing You Do Online is Private,” which referenced a paragraph written by Writynga in response to Zuckerberg’s view at the time (2012) that privacy was no longer a social norm:

We like to say that we grew up with the Internet, thus we think that the Internet is all grown up. But it’s not. What is intimacy without privacy? What is a democracy without privacy?…Technology makes people stupid. It can blind you to what your underlying values are and need to be. Are we really willing to give away our constitutional and civil liberties that we fought so hard for? People shed blood for this, to not live in a surveillance society. We looked at the Stasi and said, ‘That’s not us.’

The will of the people has demanded more transparency.

But we don’t want a state of surveillance that eerily feels like living in a police state. There has to be a balance between ensuring the security of the nation and protecting our civil liberties.

People will have Full Transparency… Full Control… Anytime

Since Privacy by Design (PbD) was adopted as an international standard in 2010 to complement privacy regulation, it has been translated into 40 languages. The approach has been extended to include the premise that individual privacy can be protected while building consumer trust and improving revenue opportunities for business, within a positive-sum paradigm. Cavoukian is convinced this is the practical way forward for business:

We can have privacy and meet business interests, security and public safety … it can’t be an either/or proposition. I think it’s the best way to proceed, in a positive-sum, win/win manner, thereby enabling all parties to gain.

Privacy by Design’s Foundational Principles include:

- Proactive not Reactive: preventive not remedial

- Privacy as the default setting

- Privacy embedded into design

- Full functionality: positive sum, not zero-sum

- End-to-end security: full lifecycle protection

- Visibility and transparency: keep it open

- Respect for user privacy: keep it user-centric

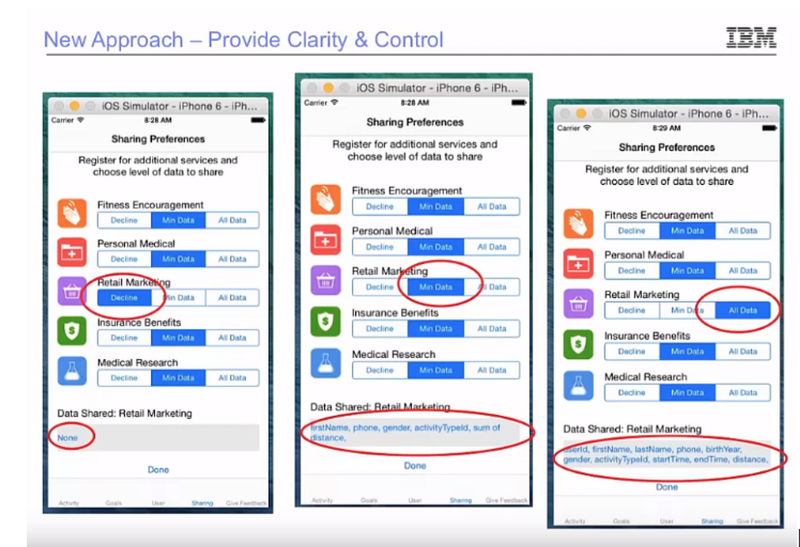

Cavoukian contends that Principle #2, Privacy as the Default Setting, is critical and, of all the foundational principles, the hardest to achieve, since it demands the most investment and effort. It brings explicit requirements that change how data is collected, used and disclosed, and it will result in changes to data policy and process, including new user-centric privacy controls.
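To make “privacy as the default setting” concrete, here is a minimal Python sketch built around a hypothetical `PrivacySettings` record for a consumer account. Every data-sharing option starts in its most protective state, so a user who never opens the settings page is still protected, and any loosening happens only through an explicit, user-initiated change. The field names and the `opt_in` helper are illustrative assumptions, not drawn from any real product.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class PrivacySettings:
    """Hypothetical per-user settings; every field defaults to its most private value."""
    share_with_partners: bool = False
    personalized_ads: bool = False
    location_history: bool = False
    profile_visibility: str = "private"  # assumed options: "private", "friends", "public"


def opt_in(settings: PrivacySettings, **changes) -> PrivacySettings:
    """Return a copy with the requested changes applied.

    The system never loosens a setting on the user's behalf; this function is
    assumed to be called only from a user-initiated action in the privacy UI.
    """
    allowed = {f.name for f in fields(PrivacySettings)}
    unknown = set(changes) - allowed
    if unknown:
        raise ValueError(f"unknown settings: {sorted(unknown)}")
    return replace(settings, **changes)


new_user = PrivacySettings()                 # protected without any action by the user
print(new_user.personalized_ads)             # False
updated = opt_in(new_user, personalized_ads=True)
print(updated.personalized_ads, new_user.personalized_ads)  # True False
```

Making the settings object immutable means every change produces a new record, which makes it easier to log when and how a user chose to share more.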

Article 21 of the GDPR gives individuals the “right to object” to the processing of their personal information at any time, including for direct marketing and profiling:

“The controller shall no longer process the personal data unless the controller demonstrates compelling legitimate grounds for the processing which override the interests, rights, and freedoms of the data subject.”

Businesses must be more explicit and go much further than traditional disclosures and terms of service. Purpose specification and use limitation require organizations to be explicit about the information they require and for what purpose, and to obtain consent specifically for that purpose and that purpose alone. If a secondary use arises later, the organization must obtain the user’s consent again. If disclosure is key to transparency, businesses will need to find a way to provide it while mitigating consent fatigue.
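The purpose-specification rule above can be sketched as a consent ledger keyed by user and purpose. This is a minimal Python illustration under stated assumptions: the `ConsentLedger` class, the purpose names and the in-memory storage are hypothetical, and the sketch models consent only, whereas the GDPR also recognizes other lawful bases for processing. Processing is allowed only for a purpose the user agreed to. A secondary use is simply a different purpose, so it fails the check until fresh consent is recorded, and a withdrawal (for example, an objection to direct marketing) removes that consent again.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLedger:
    """Hypothetical in-memory record of which purposes each user has consented to."""
    # Maps (user_id, purpose) -> UTC timestamp at which consent was given.
    grants: dict = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        """Record consent for one specific purpose, and that purpose alone."""
        self.grants[(user_id, purpose)] = datetime.now(timezone.utc)

    def withdraw(self, user_id: str, purpose: str) -> None:
        """Remove consent for a purpose; a no-op if none was recorded."""
        self.grants.pop((user_id, purpose), None)

    def may_process(self, user_id: str, purpose: str) -> bool:
        """Processing is permitted only for a purpose with recorded consent."""
        return (user_id, purpose) in self.grants


ledger = ConsentLedger()
ledger.grant("u42", "order_fulfilment")
print(ledger.may_process("u42", "order_fulfilment"))  # True
# A secondary use is a new purpose, so it needs its own consent first.
print(ledger.may_process("u42", "direct_marketing"))  # False
ledger.grant("u42", "direct_marketing")
ledger.withdraw("u42", "direct_marketing")             # the user later objects
print(ledger.may_process("u42", "direct_marketing"))  # False
```

Storing a timestamp with each grant is a deliberate choice: it lets an organization show when consent was given, which matters once a user asks.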

Article 17 establishes a much stronger user right that runs counter to current business practices, the Right to Erasure (“the right to be forgotten”):

The data subject shall have the right to obtain from the controller the erasure of personal data concerning him or her without undue delay and the controller shall have the obligation to erase personal data without undue delay.

While this provision has exceptions, such as data that establishes the data subject as an entity (through health records and banking information, for example), behaviour, transactions, future profiling analysis and contextual models are all fair game for “the right to be forgotten.” The advent of the GDPR has already given businesses a glimpse of the potential impact: companies have seen customer record volumes drop by an average of 20%, reflecting customers who did not explicitly opt in.

This is a truly user-centric system. Make no mistake, Privacy by Design will challenge current practices and upend current infrastructures.

This privacy UI simulation (IBM: Journey to Compliance) displays how potential user controls will work in real time and the extent to which the user can grant consent based on different contexts. This level of user access will require a data repository to purge user information, but must be configured with the flexibility to redeploy the data into systems down the road, should the user decide to revert.
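One way to reconcile purging with later redeployment, sketched here under explicit assumptions, is to erase a user’s record from the active store while handing the user a portable export that only they can bring back if they change their mind. The `UserStore` class, its dictionary backing and the JSON export format are hypothetical. The sketch also ignores backups, logs and copies held by third-party processors, all of which a real erasure process would have to cover.

```python
import json


class UserStore:
    """Hypothetical active data store holding personal records keyed by user ID."""

    def __init__(self) -> None:
        self._records = {}  # user_id -> dict of personal data

    def save(self, user_id: str, record: dict) -> None:
        self._records[user_id] = dict(record)

    def get(self, user_id: str):
        return self._records.get(user_id)

    def erase(self, user_id: str) -> str:
        """Delete the user's record and return it to them as a portable JSON export.

        Raises KeyError if the user has no record. After this call the store keeps
        no copy; the export is assumed to be delivered to the user, not retained.
        """
        record = self._records.pop(user_id)
        return json.dumps({"user_id": user_id, "record": record})

    def reinstate(self, export: str) -> str:
        """Reload data only when the user re-submits their own export."""
        payload = json.loads(export)
        self.save(payload["user_id"], payload["record"])
        return payload["user_id"]


store = UserStore()
store.save("u42", {"email": "a@example.com", "segment": "travel"})
bundle = store.erase("u42")
print(store.get("u42"))   # None
store.reinstate(bundle)   # the user decides to revert
print(store.get("u42"))   # {'email': 'a@example.com', 'segment': 'travel'}
```

Putting the export in the user’s hands, rather than keeping a shadow copy, keeps the “revert” option from quietly undermining the erasure itself.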

Can Privacy by Design Create a Positive-Sum Existence for Business?

If you had asked me a year ago, I would have argued that Privacy by Design is not realistic for business adoption, let alone acceptance. It will upend process, structure and policy. However, under the mandate of the GDPR, it is an inevitability.

We asked Ann Cavoukian to consider business practices today. Google and Facebook have faced enormous fines in the wake of the GDPR, to the tune of $9.3 billion. Because of the recent Cambridge Analytica data breach, Facebook is investing millions in tools and resources to minimize future occurrences. Its recent Q2 stock plunge took the market by surprise, but Zuckerberg made it clear the company would take a performance hit for a few quarters in order to improve the platform for its users, not for its shareholders. While Facebook may now hold itself up as a beacon of how companies should behave, its clear “ask forgiveness later” model undercut any appearance that this strategy was altruistic.

Emily Sharpe, Privacy Policy Manager at Facebook, says that in preparing for the GDPR, the company paid particular attention to the Article 29 Working Party’s Transparency Guidance:

We have prepared for the past 18 months to ensure we meet the requirements of the GDPR. We have made our policies clearer, our privacy settings easier to find and introduced better tools for people to access, download, and delete their information. In the run up to GDPR we asked people to review key privacy information which was written in plain language, as well as make choices on three important topics. Our approach complies with the law, follows recommendations from privacy and design experts, and is designed to help people understand how the technology works and their choices.

Cavoukian pointed to a study by IBM with the Ponemon Institute that brought awareness to the cost of data breaches. It reports that the global average cost of a data breach rose 6.4 percent over the previous year, to $3.86 million per incident. On a per-record basis, the average cost of each record lost rose 4.8 percent, to $148. As Cavoukian points out, these costs will continue to rise if you keep Personally Identifiable Information (PII) at rest.
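The study’s percentages also let you back out the prior-year baselines, and the per-record average gives a rough sense of how exposure scales with the amount of PII kept at rest. This Python sketch uses only the figures quoted above; the function names are hypothetical, and the linear estimate is an illustrative simplification, since an average per-record cost should not be extrapolated to very large breaches.

```python
def prior_year_value(current: float, pct_increase: float) -> float:
    """Undo a year-over-year percentage increase: current = prior * (1 + pct / 100)."""
    return current / (1 + pct_increase / 100)


def naive_exposure(records_at_rest: int, cost_per_record: float = 148.0) -> float:
    """Rough breach exposure if every stored record were lost at the average cost.

    Illustrative only: an average per-record cost does not scale linearly
    to very large breaches.
    """
    return records_at_rest * cost_per_record


# Figures quoted from the IBM/Ponemon study above.
print(round(prior_year_value(3.86, 6.4), 2))   # 3.63 (million USD per incident)
print(round(prior_year_value(148, 4.8), 2))    # 141.22 (USD per record)

# Data minimization: keeping fewer records at rest shrinks the exposure.
print(naive_exposure(50_000))   # 7400000.0
print(naive_exposure(20_000))   # 2960000.0
```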

PbD requires a full end-to-end approach that includes both privacy and security across:

- IT systems;

- accountable business practices; and

- networked infrastructure.

How Do You Address the Advertisers Who Successfully Monetize Data Today?

What do you say to advertisers and publishing platforms who play in this $560-billion industry? We can’t stop progress. The more data out there, the more demand from willing buyers to extract meaning from it. On the other hand, given the fallout from Facebook, some advertisers have been greylisted or blacklisted from advertising on the platform because of questionable practices or content. The platform changes have also significantly curbed ad reach for current advertisers. This domino effect is now compounded by GDPR mandates to obtain explicit consent and create greater transparency about data use. Ann Cavoukian said this:

The value of data is enormous. I’m sorry but advertising companies can’t assume they can do anything they want with people’s data anymore. I sympathize with them. I really do; their business model will change dramatically. And that is hard to take so I genuinely feel bad for them. But my advice is: that business model is dying so you have to find a way to transform this so you involve your customers, engage them in a consensual model where benefits will accrue to customers as well. Context is key. Give individuals the choice to control their information and gain their consent to exchange it for something they value from you.

Mary Meeker’s “Paradox of Privacy” points to consumers’ increasing demand for products and services that are faster, easier, more convenient and more affordable. Delivering this requires systems that can leverage personal information. Increased customization is the expectation, but it brings increased business risk. According to Cavoukian, as long as current business practices persist, businesses remain vulnerable to incessant data breaches and cyber attacks, as we’ve witnessed. Equifax and Target are two cases in point.

Communication with the data subject needs to be a win/win (positive sum). Can the business provide the necessary value, while respecting the choices dictated by the individual? When AI becomes more pervasive this will become even more challenging as streaming data will require more real-time interfaces and applications that allow access and individual configuration of data types across various contexts and vertical uses.

I asked a few executives from various data start-ups and established enterprise businesses, who have considerable business-to-consumer experience ranging from advertising to social technology to network platforms, to weigh in on the privacy debate:

Josh Sutton, CEO of Agorai, is the former Global Head for Data and AI at Publicis.Sapient. In an advertising industry that drives hundreds of billions in revenue, the quest to build consumer relevance comes at a cost, one that grows as more companies look to artificial intelligence to drive precision:

Data is clearly one of the most valuable assets in the world today — especially with the growing importance of artificial intelligence (AI) which relies on massive amounts of data. Data privacy needs to be incorporated into the fabric of how these technologies work in order for society to get the most benefit from AI. To me, data privacy means having the ability to control when and why data that you own is used — not the ability to keep it secret which is a far easier task. For that to happen, there needs to be open and transparent marketplaces where people and companies can sell data that they create, as well as a consistent set of regulations for how companies can use data.

Dr. Nitin Mayande, Chief Scientist of Tellagence and former Data Scientist at Nike, concurs with Josh Sutton. Nitin has been studying social network behavior for years and understands the need to transform current approaches:

Sooner or later I envision a data marketplace — a supply side and a demand side. Today, companies leverage data at the user’s expense and monetize it. The end user does not experience any real economic benefit. Imagine a time when data becomes so valuable the individual can have full control and become the purveyor of his/her own information.

Dana Toering, Chief Revenue Officer at Yroo and former Managing Director at Adobe Advertising Cloud, saw during his career the emergence of ad platforms that relied heavily on treasure troves of data to achieve ever finer granularity in ad targeting:

As an entire ecosystem I feel we are just now coming to terms with the evolution of value exchange that was established between end users and digital publishers and software developers starting in October 1994 when Hotwired.com ran the internet’s first banner ad. The monetization of audiences through advertising and wide-spread data harvesting of the same audiences in exchange for ‘free’ content or software has enabled the meteoric growth of the internet and the businesses that are built around it but has also enabled massive amounts of fraud and nefarious activity. Thankfully we are at a tipping point where corporations/brands and users alike are taking back data ownership and demanding transparency, as well as consent and accountability. Defining and managing the core tenets of this value exchange will become even more important (and complex) in the future with the rise of new technologies and associated tools. So the time is now to get it right so both businesses and users can benefit long term.

I have had curious discussions with Dr. Sukant Khurana, Scientist heading the Artificial Intelligence, Data Science, and Neurophysiology laboratory at CSIR-CDRI, India. As an entrepreneur also working on various disruptive projects, he had this to say, echoing the above sentiments:

The debate between privacy and security is a misleading one, as the kind and amount of data shared with private companies and the government need not and should not be the same. AI has been vilified in data privacy issues but the same technology (especially the upcoming metalearning approaches) can be used to ensure safety while preventing unwanted marketing and surveillance. If the monitoring tools (by design) were made incapable of reporting the data to authorities, unless there was a clear security threat, such situation would be like having nearly perfect privacy. It is technologically possible. Also, we need to merge privacy with profits, such that by and large, companies are not at odds with the regulatory authorities. This means there needs to be smarter media and social platforms, which present more choices for data sharing, choices that are acceptable between the end customer and the platforms.

Alfredo C. Tan, Industry Professor at the DeGroote School of Business at McMaster University, has extensive experience with B2C advertising platforms and understands the need for a fair exchange with trust baked in:

If there was better control and understanding of how personal data is being used, I believe people would be willing to be more open. The balance is ensuring there is a fair value exchange taking place. In exchange for my data, my experiences become better, if not in the present but in the future. And as long as this is a trusted relationship, and people understand the value exchange then people are open to sharing more and more information. I am happy that Facebook, Amazon, and other platforms are aware that I am a male between 35–45 with specific interests in travel and pets, but no interest in hockey or skateboarding. Or that based on certain movies I watch, Netflix makes recommendation on what other types of content I would be interested in to keep me more entertained. And maybe that data is used elsewhere, with my permission to make experiences better on other platforms. The battle for data in an increasingly competitive consumer landscape is to increase engagement using personalized insight they have gleaned about their customers to ultimately create better experiences. I am certain many people do not want to go back to the anonymous web where all of us are treated largely the same and there was no differentiation in the experience.

Everyone agrees that a regression to anonymity is neither plausible nor tenable.

Privacy, Security, Trust and Sustainability

This is the future, and it’s critical that business and government develop a stance and embrace a different way of thinking. As AI becomes more pervasive, the black box of algorithms will require businesses to develop systems and policies that stay vigilant against potential harms. Cavoukian understands it’s an uphill battle:

When I have these conversations with CEOs, at first they think I’m anti-business and all I want to do is shut them down. It’s the farthest thing from my mind. You have to have businesses operating in a way that will attract customers AND keep their business models operating. That’s the view I think you should take. It has to be a win/win for all parties.

Do you have a data map? I always start there. You need to map how the data flows throughout your organization and determine where you need additional consent. Follow the flow within your organization. This will identify any gaps that may need fixing.
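Cavoukian’s data-map exercise can be sketched as a small graph of data flows. In this minimal Python illustration, every system name, data category and purpose is hypothetical. Each flow records where a category of personal data moves and why; `downstream` follows the flow from a collection point, and `find_consent_gaps` flags any flow whose purpose is not among those consented to for that category. The flagged flows are the gaps where additional consent, or a redesign, may be needed.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """One hop of personal data between systems, with the purpose it serves."""
    source: str
    destination: str
    category: str   # e.g. "email", "purchase_history"
    purpose: str    # why the data moves along this hop


def downstream(flows, start):
    """List every system that data entering at `start` can reach, in BFS order."""
    seen, queue, order = {start}, deque([start]), []
    while queue:
        node = queue.popleft()
        for f in flows:
            if f.source == node and f.destination not in seen:
                seen.add(f.destination)
                order.append(f.destination)
                queue.append(f.destination)
    return order


def find_consent_gaps(flows, consented):
    """Return flows whose purpose is not covered by consent for that data category.

    `consented` maps a data category to the set of purposes users agreed to.
    """
    return [f for f in flows if f.purpose not in consented.get(f.category, set())]


# Hypothetical data map for a small retailer.
data_map = [
    Flow("checkout", "order_db", "email", "order_confirmation"),
    Flow("order_db", "crm", "purchase_history", "customer_support"),
    Flow("crm", "ad_partner", "email", "ad_targeting"),
]
consents = {
    "email": {"order_confirmation"},
    "purchase_history": {"customer_support"},
}

print(downstream(data_map, "checkout"))  # ['order_db', 'crm', 'ad_partner']
for gap in find_consent_gaps(data_map, consents):
    print(f"{gap.source} -> {gap.destination}: {gap.category} for {gap.purpose}")
# prints: crm -> ad_partner: email for ad_targeting
```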

TRUST: it takes years to build… and days to lose…

Perhaps this is the view that companies should take. Ann Cavoukian maintains that those who have implemented PbD say it builds enormous trust. When you have a trusted business relationship with your customers, they’re happy to give you additional consent down the road. They just don’t want the information flowing out to third parties unknown.

I tell companies if you do PbD, shout it from the rooftops. Lead with it. Tell your customers the lengths you’re going to to protect their privacy, and the respect you have for them. They will thank you in so many ways. You’ll gain their continued loyalty, and you’ll attract new opportunity.

I say to companies who see privacy as a negative, saying that it stifles creativity and innovation: ‘It’s the exact opposite: Privacy breeds innovation and prosperity, and it will give you a competitive advantage. It allows you to start with a base of trust, which steadily enhances the growth of your customers and their loyalty. Make it a win/win proposition!’

Ann Cavoukian has recently launched Global Privacy and Security by Design (GPSbyDesign.org), an International Council on Global Privacy and Security. For more information on Ann Cavoukian, visit the Privacy by Design Centre of Excellence at Ryerson University.

Hessie Jones is the Founder of ArCompany advocating AI readiness, education and the ethical distribution of AI. She is also Director for the International Council, Global Privacy and Security by Design. As a seasoned digital strategist, author, tech geek and data junkie, she has spent the last 18 years on the internet at Yahoo!, Aegis Media, CIBC, and Citi, as well as tech startups including Cerebri, OverlayTV and Jugnoo. Hessie saw things change rapidly when search and social started to change the game for advertising and decided to figure out the way new market dynamics would change corporate environments forever: in process, in culture and in mindset. She launched her own business, ArCompany in social intelligence, and now, AI readiness. Through the weekly think tank discussions her team curated, she surfaced the generational divide in this changing technology landscape across a multitude of topics. Hessie is also a regular contributor to Towards Data Science on Medium and Cognitive World publications.

This article solely represents my views and in no way reflects those of DXJournal. Please feel free to contact me at h.jones@arcompany.co


Business

How businesses can protect themselves from the rising threat of deepfakes

Dive into the world of deepfakes and explore the risks, strategies and insights to fortify your organization’s defences

Published March 11, 2024, by Dave Gordon

Billy Joel’s latest video, for the just-released song “Turn the Lights Back On,” features him in several deepfakes, singing the tune as himself but decades younger. The technology has advanced to the point that it’s difficult to distinguish between a fake 30-year-old Joel and the real 75-year-old of today.

This is tech being used for good. But when it’s used with bad intent, it can spell disaster. In mid-February, a report described how a clerk at a Hong Kong multinational was hoodwinked by a deepfake video impersonating senior executives, resulting in a $35 million theft.

Deepfake technology, a form of artificial intelligence (AI), is capable of creating highly realistic fake videos, images, or audio recordings. In just a few years, these digital manipulations have become so sophisticated that they can convincingly depict people saying or doing things that they never actually did. In little time, the tech will become readily available to the layperson, who’ll require few programming skills.

Legislators are taking note

In the US, the Federal Trade Commission has proposed a ban on impersonating others using deepfakes, its greatest concern being how they can be used to fool consumers. The Feb. 16 proposal further noted that an increasing number of complaints have been filed over “impersonation-based fraud.”

A Financial Post article reported that Ontario’s information and privacy commissioner, Patricia Kosseim, feels “a sense of urgency” to act on artificial intelligence as the technology improves. “Malicious actors have found ways to synthetically mimic executives’ voices down to their exact tone and accent, duping employees into thinking their boss is asking them to transfer funds to a perpetrator’s account,” the report said. Ontario’s Trustworthy Artificial Intelligence Framework, on which she consults, aims to set guidelines for public-sector use of AI.

In a recent blog post, Microsoft said it plans to work with the tech industry and government to foster a safer digital ecosystem and tackle the challenges posed by AI abuse collectively. The company also said it’s already taking preventative steps, such as “ongoing red team analysis, preemptive classifiers, the blocking of abusive prompts, automated testing, and rapid bans of users who abuse the system,” as well as using watermarks and metadata.

Microsoft’s prevention efforts will also include improving public understanding of the risks associated with deepfakes and of how to distinguish legitimate content from manipulated content.

Cybercriminals are also using deepfakes to exploit remote hiring. The scam starts with fake job listings posted to collect information from candidates, then uses deepfake video technology during remote interviews to steal data or unleash ransomware. More than 16,000 people reported to the FBI in 2020 that they had fallen victim to this scam, and in the US this kind of fraud has resulted in losses of more than US$3 billion. Where possible, the FBI recommends holding job interviews in person to avoid these threats.

Catching fakes in the workplace

There are detector programs, but they’re not flawless.

When engineers at the Canadian company Dessa first tested a deepfake detector built using Google’s synthetic videos, they found it failed more than 40% of the time. The Seattle Times noted that the problem was eventually fixed, and that it came down to the fact that “a detector is only as good as the data used to train it.” But because the technology is advancing so rapidly, detection will require constant reinvention.

Other detection services look for telltale signs such as blood flow in the face or errant eye movements, but these may lose their effectiveness once bad actors figure out what raises red flags.

“As deepfake technology becomes more widespread and accessible, it will become increasingly difficult to trust the authenticity of digital content,” noted Javed Khan, owner of Ontario-based marketing firm EMpression. He said part of the firm’s focus is monitoring emerging tech trends and explaining them simply to entrepreneurs and small business owners.

To preempt deepfake problems in the workplace, he recommended regular training sessions for employees. A good starting point, he said, would be to test staff on MIT’s eight ways a layperson can try to spot a deepfake on their own, from unusual blinking to overly smooth skin and inconsistent lighting.

Businesses should proactively communicate the risks of deepfake manipulation through newsletters, social media posts, industry forums and workshops, he told DX Journal, to “stay updated on emerging threats and best practices.”

To stay ahead of possible attacks, he said, companies should establish protocols for “responding swiftly” to suspected deepfakes, including issuing public statements or taking corrective action.

How can a deepfake attack impact business?

The potential to malign a company’s reputation with a single deepfake should not be underestimated.

“Deepfakes could be racist. It could be sexist. It doesn’t matter — by the time it gets known that it’s fake, the damage could be already done. And this is the problem,” said Alan Smithson, co-founder of Mississauga-based MetaVRse and investor at Your Director AI.

“Building a brand is hard, and then it can be destroyed in a second,” Smithson told DX Journal. “The technology is getting so good, so cheap, so fast, that the power of this is in everybody’s hands now.”

One possible solution is for businesses to use a code word when communicating over video, as a way to determine who’s real and who’s not. But Smithson cautioned that the word shouldn’t be shared around cell phones or computers because “we don’t know what devices are listening to us.”

He said governments and companies will need to use blockchain or watermarks to identify fraudulent messages. “Otherwise, this is gonna get crazy,” he added, noting that Sora, OpenAI’s new text-to-video AI model, is “mind-blowingly good” and in another two years could be “indistinguishable from anything we create as humans.”

“Maybe the governments will step in and punish them harshly enough that it will just be so unreasonable to use these technologies for bad,” he continued. Yet he lamented that many foreign actors in hostile countries would not be deterred by any one country’s laws, a downside he said will always be a sticking point.

For now, two defence mechanisms appear to offer the best protection against the growing threat posed by deepfakes: legal and regulatory responses, and continuous vigilance and adaptation to mitigate risks. The question remains, however, whether safety can keep pace with innovation.

Dave is a journalist whose work has appeared in more than 100 media outlets around the world, including the BBC, National Post, Washington Times, Globe and Mail, New York Times and Baltimore Sun.


The new reality of how VR can change how we work

It’s not just for gaming — from saving lives to training remote staff, here’s how virtual reality is changing the game for businesses

Published


March 7, 2024

Until a few weeks ago, you might have thought that “virtual reality” and its cousin “augmented reality” were fads that had come and gone. At the peak of the last frenzy around the technology in 2021, Facebook changed its corporate name to Meta, a sign of how determined founder Mark Zuckerberg was to create a VR “metaverse,” complete with cartoon avatars (which for some reason had no legs; they have legs now, but with some restrictions on how they work).

Meta has since spent more than $36 billion on metaverse research and development, but has relatively little to show for it. The company has sold about 20 million Quest VR headsets, but according to some reports, few people are spending much time in the metaverse. A lack of legs for your avatar probably isn’t the main reason. No doubt many users were left wondering: What are we supposed to be doing in here?

The evolution of virtual reality

Things changed fairly dramatically, however, in June 2023, when Apple demoed its Vision Pro headset, and again in early February, when the device finally went on sale. At US$3,499, it is definitely not for the average consumer, but using it has changed the way some people think about virtual reality, or the “metaverse,” or whatever we choose to call it.

Some of the enhancements Apple has brought to the headset experience have convinced Vision Pro true believers that we are at, or close to, the same kind of inflection point that followed the release of the original iPhone in 2007. Others, however, aren’t so sure we are there yet.

The metaverse sounds like a place where you bump into giant dinosaur avatars or play virtual tennis, but “spatial computing,” the term Apple prefers, puts the focus on using a headset to enhance what users already do on their computers. Some users create multiple virtual screens that hang in the air, letting them walk around their homes or offices with their virtual desktop always in front of them.

VR fans are excited about the prospect of watching a movie on what looks like a 100-foot-wide TV screen hanging in the air in front of them, or playing a video game. But what about work-related uses of a headset like the Vision Pro?

Innovating health care with VR technology

One of the most obvious applications is in medicine, where doctors are already using remote viewing software to perform checkups or even operations. At Cambridge University, game designers and cancer researchers have teamed up to make it easier to see cancer cells and distinguish between different kinds.

Heads-up displays and similar technologies are already in use in aerospace engineering and other fields, because they allow workers to view a wiring diagram or schematic while repairing the equipment it depicts. VR headsets could make such tasks even easier by enlarging those diagrams or schematics and superimposing them on the real thing. The same approach could work for digital scans of a patient during an operation.

Using virtual reality, patients and doctors could also conduct remote consultations more easily, letting patients show what is happening to them and giving health professionals the ability to offer tips and direct recommendations visually.

This would not only help with providing care to people who live in remote areas, but could also help when there is a language barrier between doctor and patient.

Impacting industry worldwide

One technology consulting firm writes that a Vision Pro or other VR headset could be used to streamline assembly and quality control in maintenance tasks. Overlaying diagrams, 3D models, and other digital information onto an object in real time could enable “more efficient and error-free assembly processes” by providing visual cues, step-by-step guidance, and real-time feedback.

Virtual reality could also be used to onboard new staff remotely in a variety of roles, allowing them to move around and practise training tasks in a virtual environment.

Some technology watchers believe virtual reality could transform the retail industry as well. Millions of consumers have become used to buying online, but some categories, such as clothing and furniture, have lagged, in part because it is difficult to tell how a piece of clothing will look once you are wearing it, or how that chair will look in your home. VR promises the kind of immersive experience that makes this possible.

While many consumers may see this technology only as an avenue for gaming and entertainment, businesses are already using it in manufacturing, health care and workforce development. As far back as 2020, 91 per cent of businesses surveyed by TechRepublic either used or planned to adopt VR or AR technology, and as the technology advances, adoption is likely to keep ramping up.

Mathew Ingram is a veteran journalist and technology writer whose work focuses on the intersection between media, technology and culture. He is endlessly fascinated by the changes that the internet and the mobile and social web have produced — and are continuing to produce — in the way that we behave towards each other, the way we consume information and the way we see the world around us.


5 tips for brainstorming with ChatGPT

How to avoid inaccuracies and make full creative use of ChatGPT

Published


March 1, 2024

By Veronica Ott

ChatGPT reached a staggering 100 million users by January 2023. Since it has one of the fastest-growing user bases of any software, we expect even higher numbers this year.

It’s not hard to see why.

Amazon sellers use it to optimize product listings that bring in more sales. Programmers use it to write code. Writers use it to get their creative juices flowing.

And occasionally, a lawyer might use it to prepare a court filing, only to fail miserably when the judge notices numerous fake cases and citations.

Which brings us to an important point: ChatGPT is not infallible. It’s best used as a brainstorming tool, with a skeptical eye on every output.

Here are five tips for how businesses can avoid inaccuracies and make full creative use of generative AI when brainstorming.

- Use it as a base

Hootsuite’s marketing VP Billy Jones has talked about using ChatGPT as a jumping-off point for his marketing strategy. He shared an example of how he used it to create audience personas for his advertising.

Would he ask ChatGPT to create audience personas for Hootsuite’s products? Nope, that would leave too many gaps for the platform to fill with false assumptions. Instead, Jones asks for demographic data on social media managers in the US, a request ChatGPT can handle easily. From there, he pairs the output with his own research to create audience personas.

- Ask open-ended questions

You don’t need ChatGPT to tell you yes or no; even if you learn something new, a yes-or-no answer doesn’t really get your creative juices flowing. Consider the difference between these two prompts:

- Does history repeat itself?

- What are some examples of history repeating itself in politics in the last decade?

Open-ended questions give you much more opportunity to get inspired and ask questions you may not have thought of.

- Edit your questions as you go

ChatGPT has a wealth of data at its virtual fingertips to examine and interpret before producing an answer. That means you can narrow the scope for a more focused response, using follow-up prompts that refine its answers.

For example, you might ask ChatGPT for book recommendations for your book club. Once you get an answer, you could narrow it down by adding another requirement, such as specific release years, topic categories or mentions by reputable reviewers. Adding context about what you’re looking for will yield more nuanced answers.

- Gain inspiration from past success

Have an idea you’re unsure about? Ask ChatGPT about successes with a particular strategy or within a particular industry.

The platform draws on a vast body of news releases, reports, statistics and other content, so it can surface relatable cases from all over the world. Adding the word “adapt” to a prompt can help you take strategies that have worked in the past and apply them to your question.

For example, the prompt “Adapt sales techniques to effectively navigate virtual selling environments” can generate new solutions by drawing on how old problems were solved.

- Trust, but verify

You wouldn’t publish the rough notes from a brainstorming session. Similarly, don’t treat anything ChatGPT says as true until you’ve verified it with your own research.

The University of Waterloo notes that combining ChatGPT with curiosity and critical thinking can help you think through ideas and explore new angles. But once the brainstorming is done, it’s time to turn to real research for confirmation.

Veronica Ott is a freelance writer and digital marketer with a specialization in finance and business. As a CPA with experience in the industry, she’s able to provide unique insight into various monetary, financial and economic topics. When Veronica isn’t writing, you can find her watching the latest films!
